The Missing Layer in AI Systems

Why modern AI behaves unpredictably and existing infrastructure cannot control it

This is Part II of a three-part series examining how modern AI systems are interpreted and where those interpretations break down. Part I, here, identified a structural gap between how systems are controlled and how their outputs are allowed to influence system state. Part II focuses on how that gap manifests in practice. The issue is not a collection of isolated failures, but a recurring pattern that emerges across different systems and use cases. Understanding that pattern is necessary before identifying what is missing and what must change.

Part 2 — The Failure Mode

When Performance Becomes Both Means and End

In Part I, the argument was straightforward. Modern infrastructure is highly effective at controlling execution, access, and scaling. It can determine where code runs, who can invoke it, and how systems respond under load. What it does not govern is what outputs become after they are generated. Outputs are no longer terminal artifacts. They are stored, retrieved, reused as context, and in many cases incorporated into subsequent actions. As a result, systems are no longer shaped only by their inputs and code paths, but by the accumulation and reuse of their own outputs over time.

Once outputs begin to circulate in this way, interpretation is no longer a passive activity. Outputs are not simply read and discarded. They are taken up, reused, and in some cases treated as if they were reliable inputs into further computation. This introduces a structural condition in which meaning accumulates without explicit qualification. What the system produces begins to influence what the system does next, even when the basis for that influence has not been validated.

The pattern that follows is consistent. A system produces a strong result under a particular set of conditions. That result is then generalized beyond those conditions. The generalization is interpreted as a broader capability, and that capability is treated as evidence of a more stable or comprehensive property of the system. Each step is understandable on its own, but the transitions between them are rarely examined. The result is that performance in a constrained setting is extended into claims about what the system can do more generally.

The error does not lie in the performance itself. It lies in how that performance is interpreted. Outputs are treated as indicators of what the system is, rather than as instances of what the system produced under specific conditions. This distinction is often implicit, but it is critical. When it is not maintained, systems appear more coherent, more stable, and more capable than they are in practice.

This matters because these interpretations do not remain theoretical. They shape how systems are used, how they are integrated, and how their outputs are trusted. Once a system is treated as reliable beyond its demonstrated conditions, its outputs begin to carry weight in contexts where they have not been validated. At that point, the consequences of misinterpretation extend beyond individual outputs and begin to affect system behavior over time.

This is the first part of the failure mode. It begins with how outputs are read. It does not end there. As these interpretations are reused and propagated through systems, they begin to influence what systems do next.

How Errors Propagate

The error described in the previous section does not remain confined to how an output is interpreted in a single moment. Once outputs are reused within a system, misinterpretation becomes part of the system’s behavior. What begins as a reading error becomes embedded in how the system operates over time.

This shift occurs because outputs are no longer isolated responses. They are stored, retrieved, and reintroduced into future interactions. Systems increasingly rely on memory, retrieval mechanisms, and accumulated context to generate subsequent outputs. When an output is reused in this way, it no longer functions as an observation. It becomes an input. That change in role is consequential, because inputs influence what the system does next, regardless of whether they were originally interpreted correctly.

As these outputs move through a system, they pass across multiple layers. They may be written into memory, indexed for retrieval, incorporated into prompts, or passed through tool chains and connected services. At each stage, the distance from the original conditions under which the output was generated increases. The context in which the output was valid is not preserved with the same fidelity. What remains is the artifact itself, now detached from the constraints that shaped it.

Over time, this process produces accumulation. Reused outputs influence future outputs, which are then reused again. Small inaccuracies or misinterpretations are not necessarily corrected. Instead, they can be reinforced. The system begins to reflect its own prior artifacts, and its effective state shifts as a result. This is not the result of a single failure. It is the outcome of repeated reuse without qualification.

The same pathways that allow reuse also allow external content to enter the system. Retrieved documents, tool outputs, and user-provided inputs may contain incorrect or adversarial information. Once incorporated and persisted, these elements can reappear in later interactions. When they do, they are often treated as valid context, even when their origin or reliability is uncertain. The distinction between internally generated content and externally introduced content becomes less clear as both are integrated into the same representational space.

The consequences become more pronounced when outputs are used to trigger actions. Systems are increasingly connected to tools, external services, and workflows that allow them to do more than produce text. They can call APIs, modify data, initiate processes, and affect external state. When outputs are used in this way, the nature of the error changes. It is no longer limited to what the system says. It extends to what the system does. At that point, an unqualified output can result in a durable effect.

These dynamics are not always visible. Individual outputs often appear reasonable when evaluated in isolation. The effects of propagation occur across multiple steps and over extended periods. As a result, those who design and closely interact with such systems are more likely to observe inconsistencies and instability, while the broader user base may not immediately recognize the pattern. This gap in visibility allows the process to continue without being clearly identified as a structural issue.

The result is a system in which outputs are not only generated, but circulated, accumulated, and acted upon. Misinterpretation does not remain an interpretive error. It becomes embedded in system behavior. At that point, the question is no longer how outputs are read, but how they move through a system and what they influence along the way.

When Outputs Become Actions

The previous sections established a progression. Outputs are first misinterpreted, then reused, and then propagated across systems. The remaining step is where those outputs begin to produce effects. At that point, the system is no longer only generating responses. It is participating in processes that change its own state or the state of external systems.

When outputs are used to call tools, trigger workflows, or modify data, they are no longer treated as provisional. They function as inputs into execution. This transition occurs without a consistent mechanism that determines whether an output should be acted on in the first place. Systems verify that an action can be executed. They check permissions, formats, and access conditions. What they do not evaluate is whether the output that initiated the action is appropriate to act on under the circumstances in which it was generated.

This absence becomes visible through the types of consequences that follow. An output that appears reasonable in isolation can initiate a process that produces unintended results. Data may be modified based on an incorrect assumption. A workflow may proceed on the basis of an unverified interpretation. External systems may be affected in ways that are not aligned with the original intent of the interaction. These outcomes are not the result of a single failure at the point of generation. They arise from the absence of a mechanism that governs the transition from output to action.

In a common consumer setting, this can take a simple form. A system assists with scheduling and proposes a meeting time based on prior context. That context changes, but the earlier output is stored and reused. When the system later acts on that information, it schedules or confirms a time that no longer reflects current conditions. The initial output was reasonable when produced. The error emerges only after it is reused and acted upon without requalification.

Some of these effects persist. Once an output has been incorporated into memory, written to a database, or used to update system state, it can influence future behavior. Subsequent outputs may be conditioned on that state, and the system may continue to operate on the basis of an earlier, unqualified step. In these cases, the consequences are not limited to a single action. They extend across time and shape the system’s ongoing operation.

In more structured environments, the same pattern appears in a different form. A model interprets financial data and produces a recommendation that is treated as sufficiently reliable for downstream use. That recommendation is incorporated into a workflow or decision process, and subsequent steps rely on it as if it were a stable input. If the initial interpretation was incomplete or context-dependent, the system can continue to operate on that assumption, compounding the effect across multiple steps. The issue is not the presence of a single incorrect output, but the accumulation of unqualified state that influences later decisions.

The impact is amplified by the structure of modern systems. Outputs can move quickly across components, services, and environments. A single unqualified step can propagate through multiple layers, affecting processes that were not directly connected to the original interaction. As systems become more interconnected, the scope of these effects increases, and the relationship between the initial output and its eventual consequences becomes more difficult to trace.

Existing infrastructure does not address this transition. It is designed to ensure that actions are executed correctly, not that they are initiated appropriately. Access control determines who can act. Orchestration determines how actions are carried out. Policy systems define allowable operations. None of these mechanisms evaluate whether a generated output should become an action within the system.

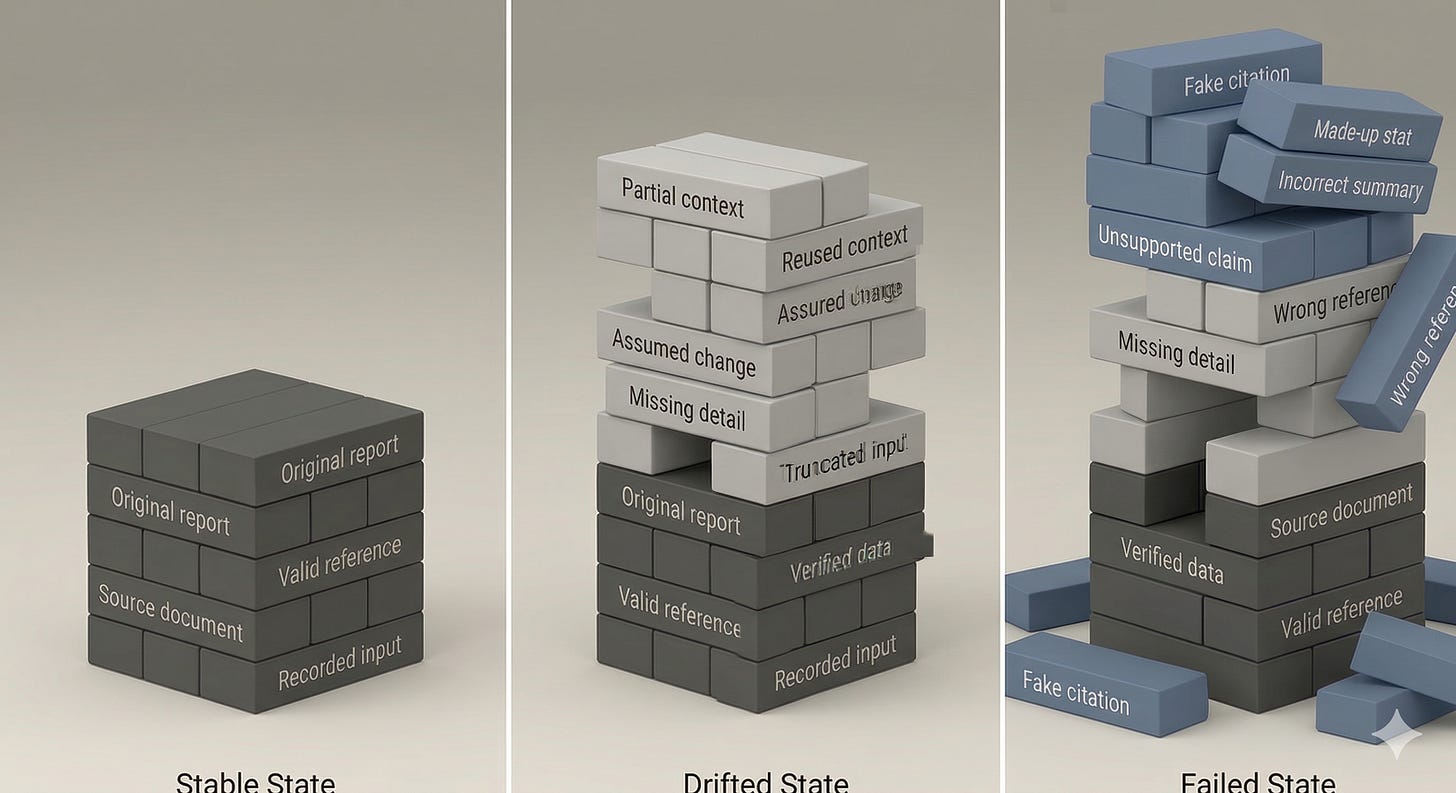

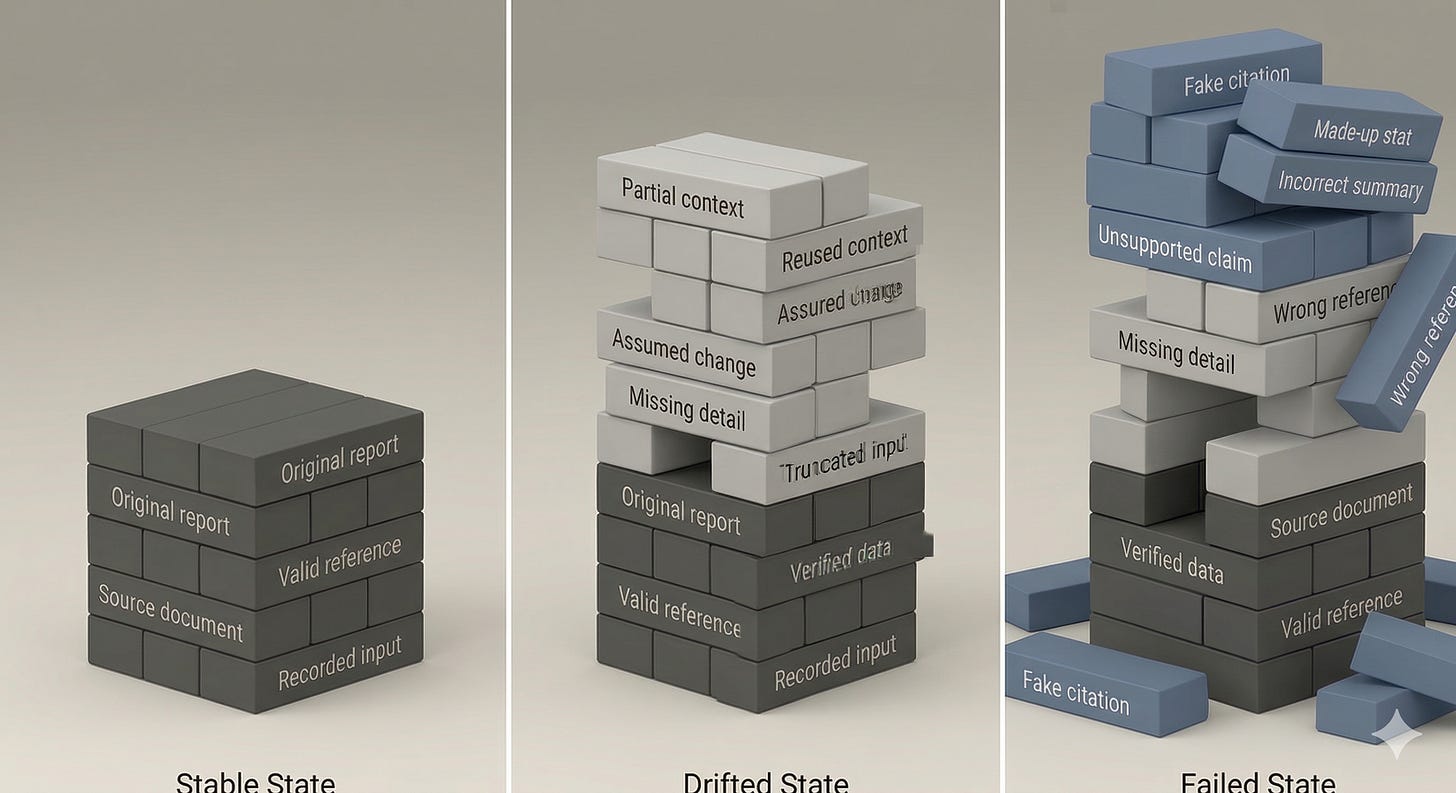

Figure 1 provides a schematic illustration of the transition described throughout Part II using a corporate reporting context. The example centers on a strategic corporate report, such as a quarterly performance or risk assessment document, where initial inputs are verified and well-formed. As the report is iteratively expanded, summarized, and incorporated into subsequent workflows, intermediate outputs begin to reflect partial context, reused assumptions, and missing detail. These intermediate states remain operational within the system and are treated as stable inputs in later steps. Over time, this accumulation produces outputs that appear coherent but are no longer grounded in the original source material. The figure visualizes this progression from stable input to drifted interpretation and, ultimately, to failed state, where unqualified outputs are propagated and acted upon.

Looking Ahead

Part I established the structural gap between how systems are controlled and how their outputs are allowed to influence system state. Part II has examined how that gap manifests in practice. The pattern is consistent. Outputs are overread, propagated across system boundaries, and incorporated into actions without a mechanism that evaluates whether they should be treated as operational.

What emerges from this is not a failure of any single component, but a misalignment between how systems generate information and how that information is allowed to shape behavior over time. Outputs are no longer confined to the moment in which they are produced. They persist, circulate, and, under certain conditions, acquire a role they were never explicitly designed to hold.

The question that follows is therefore not limited to output quality or execution correctness. It is structural. If outputs can move from observation to action without qualification, then the system lacks a point at which that transition is governed. Understanding that absence, and what it implies for system design, is the focus of Part III.

I totally get this and where you are going with this. I have been working with people on a addressing this problem. It seems some smart people think that some simple guardrails inside an agent are all you need to fix the problem. I agree that those are necessary, but not sufficient.

For simple Agentic flows, simply resetting the context and controlling things like temperature cam give you almost deterministic results. It's just that just like humans, the agent may deal with novel situations in novel ways. The process and resources and agent uses as well as the output needs to be verified in some way. Spot checking and auditing might catch some things, but these problems often happen at the perephery and infrequently. It's not really a huge problem now, but it isn't hard to imagine that it will be in the future.